Drones aloft

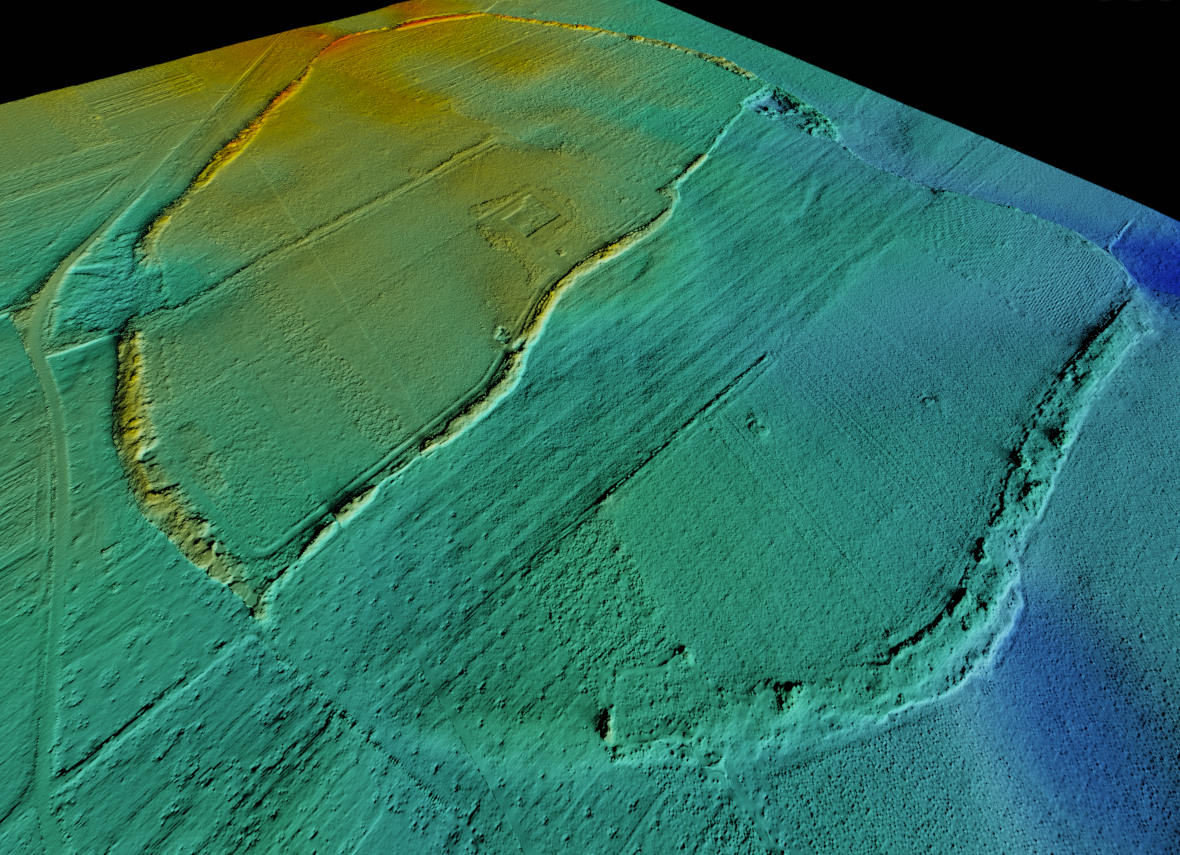

While a better understanding of this extensive area is key to answering important historical questions, we also have much to learn from the process of researching it: not only by developing and fine-tuning our methods, but also by mapping the area and thereby complementing earlier surveys and excavations. Mapping is done by collecting large numbers of images with sufficient overlap and processing these into detailed 3D models, which can be analysed further and also offer new visualisations. Because the models are tied to dGPS-measured targets (mounted on 1 × 1 m aluminium plates) that are easy to identify in the images, they are highly precise and can be placed in a GIS (Waagen 2019). To contribute to the study of the field survey data, we have focused our efforts on six test sites that were also included in the survey. Four of these (A–D) contain clusters of tumuli, allowing us to take a detailed look at these sites without losing sight of potential ‘off-site’ remains. The remaining two test sites are settlements: a small prehistoric settlement site at the southern end of the Voulokaliva (site 35), and the Classical/Hellenistic settlement on the nearby tell-site of Magoula Plataniotiki, where excavations have been ongoing since 2013.
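Two things in this workflow can be planned with simple geometry: how far apart photos must be taken to reach a given overlap, and how many pixels a 1 × 1 m target will cover. The sketch below assumes a nadir-pointing pinhole camera over flat terrain. The function names and the altitude, field of view and image width in the example are hypothetical illustrations, not the settings or equipment used at Halos.

```python
import math

# Minimal flight-planning sketch for a nadir camera over flat terrain.
# All camera parameters used below are hypothetical examples.

def footprint_m(altitude_m: float, fov_deg: float) -> float:
    """Ground width covered along one image axis (pinhole model, nadir view)."""
    return 2.0 * altitude_m * math.tan(math.radians(fov_deg) / 2.0)

def exposure_spacing_m(altitude_m: float, fov_deg: float, overlap: float) -> float:
    """Distance between consecutive photos (or flight lines) for an overlap fraction."""
    if not 0.0 <= overlap < 1.0:
        raise ValueError("overlap must be in the range [0, 1)")
    return footprint_m(altitude_m, fov_deg) * (1.0 - overlap)

def ground_sample_distance_m(altitude_m: float, fov_deg: float, pixels: int) -> float:
    """Ground size of one pixel along the same image axis."""
    return footprint_m(altitude_m, fov_deg) / pixels

def pixels_across_target(target_m: float, altitude_m: float, fov_deg: float, pixels: int) -> float:
    """Number of pixels spanned by a square ground target of side target_m."""
    return target_m / ground_sample_distance_m(altitude_m, fov_deg, pixels)

if __name__ == "__main__":
    alt, fov, px = 50.0, 90.0, 640  # hypothetical altitude (m), field of view (deg), image width (px)
    print(f"footprint: {footprint_m(alt, fov):.1f} m")
    print(f"spacing at 80% overlap: {exposure_spacing_m(alt, fov, 0.8):.1f} m")
    print(f"1 m target spans {pixels_across_target(1.0, alt, fov, px):.1f} px")
```

With these hypothetical numbers, each image covers about 100 m on the ground, photos must be taken roughly every 20 m for 80% overlap, and a 1 m plate spans only about six pixels. This is why low-resolution sensors may call for lower flight altitudes or larger targets.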

Thermography

Our approach uses thermal infrared imaging as a remote sensing technique. By measuring minute temperature differences at the surface, an advanced thermal camera can document material differences in the soil, including potential subsurface archaeological remains (Casana et al. 2017). Consider how, on a sunny day, a car can get hot enough to fry an egg on its hood, while a grassy lawn stays cool. This is because some materials heat up quickly in the sun and readily emit that heat again (depending on properties such as thermal conductivity, heat capacity and emissivity), whereas others, notably water with its high heat capacity, resist temperature fluctuations. This resistance is known as thermal inertia. Because materials differ in composition, density, moisture content, conductivity of the matrix, etc., they respond differently to temperature fluctuations, and we can observe differences in the pace at which they heat up and cool down again. These differences are driven by the diurnal flux (the temperature difference between day and night), but are also affected by longer-term temperature fluctuations over several weeks.
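Thermal inertia is usually written as P = √(kρc), combining thermal conductivity k, density ρ and specific heat capacity c. The sketch below computes P and uses a textbook idealisation to show why a higher P means a smaller day/night temperature swing. In that idealisation, a homogeneous half-space is heated by a sinusoidal surface heat flux F·cos(ωt), and its surface temperature amplitude is F / (P·√ω). The model ignores radiative and convective losses and evaporation, so absolute values are overestimates. The soil properties and flux amplitude are hypothetical, order-of-magnitude examples, not measurements from Halos.

```python
import math

# Idealised sketch: thermal inertia P = sqrt(k * rho * c) and the diurnal
# surface-temperature amplitude of a homogeneous half-space heated by a
# sinusoidal surface heat flux. Radiative/convective losses and evaporation
# are ignored, so absolute amplitudes are overestimates.

DAY_S = 86_400.0  # length of the diurnal cycle in seconds

def thermal_inertia(k: float, rho: float, c: float) -> float:
    """Thermal inertia (J m^-2 K^-1 s^-1/2) from conductivity k (W m^-1 K^-1),
    density rho (kg m^-3) and specific heat c (J kg^-1 K^-1)."""
    return math.sqrt(k * rho * c)

def diurnal_amplitude(flux_amplitude: float, inertia: float, period_s: float = DAY_S) -> float:
    """Surface temperature amplitude (K) for a heat flux F*cos(w*t) into a
    half-space: dT = F / (P * sqrt(w))."""
    omega = 2.0 * math.pi / period_s
    return flux_amplitude / (inertia * math.sqrt(omega))

if __name__ == "__main__":
    # Hypothetical, order-of-magnitude soil properties: (k, rho, c).
    materials = {
        "dry soil": (0.3, 1600.0, 800.0),
        "wet soil": (1.5, 1900.0, 1500.0),
    }
    F = 200.0  # hypothetical net surface flux amplitude, W m^-2
    for name, (k, rho, c) in materials.items():
        P = thermal_inertia(k, rho, c)
        print(f"{name}: P = {P:.0f}, idealised diurnal amplitude = {diurnal_amplitude(F, P):.1f} K")
```

Whatever the exact values, the ratio of the two amplitudes equals the inverse ratio of their thermal inertias. Materials that differ in P therefore separate most clearly when the diurnal flux is large.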

An Experimental Workflow

Because reflected sunlight strongly affects the measurable thermal radiation, theory suggests it is best to fly between sunset and sunrise. As the clarity of a thermal signal depends on a variety of factors, our strategy is to collect data at different times during the night (after sunset, in the middle of the night and before sunrise) and at different times of the year (July, November and April). Collecting data under all these different circumstances allows us to compare the images systematically. This approach is useful in two ways: comparing images recorded under different circumstances helps us understand and interpret what we are seeing, and it also shows which conditions are most favourable for recording clear thermal signals at Halos.

At the same time, a systematic approach of this sort is also labour and data intensive. In the summer, nights are already short, but when you go flying about with a drone, they become challengingly short. And when not out in the field, we do administration work – keeping records for each and every flight, diaries of different sorts, and storing and (if possible) processing images.

Bad Weather

Preliminary processing of the images turned out to be quite important, as we discovered last November. When we arrived in Greece after days of pouring rain, the clay-rich soil was completely saturated. With a limited diurnal temperature flux (between 10 and 14 °C) and no direct sunlight during the day, we soon found that the thermal imagery recorded on our first evening flights did not contain enough distinctive information for proper photogrammetric processing. This forced us to adapt our strategy, but for the better: we decided to take high-altitude thermal overview photographs, focusing on specific test sites.

Multispectral Imaging

Luckily, we had other options as well. Another sensor we use is a multispectral camera. More commonly used in agriculture, this camera captures images at different wavelengths of light, making it possible to visualise differences in vegetation, crop health and soil humidity. Subsurface archaeological features can impede or stimulate plant growth, or favour certain plant species over others. The resulting patterns, known as crop marks, have long been studied through traditional aerial photography. Multispectral images not only complement what can be observed in ordinary photographs, but also provide much more specific, diverse and detailed information. Paired with thermal imaging, this is already proving its worth as an effective prospection method (Agudo et al. 2018; Salgado Carmona et al. 2020).
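A common way to turn multispectral bands into a vegetation map is the Normalised Difference Vegetation Index, NDVI = (NIR − Red) / (NIR + Red). Healthy, vigorous vegetation reflects strongly in near-infrared and absorbs red light. The sketch below computes NDVI per pixel with NumPy and then flags pixels that deviate strongly from the scene mean, a crude way to highlight possible crop marks. It does not claim to be the processing used in this project or in the cited studies. The z-score threshold and the toy reflectance rasters are hypothetical illustrations.

```python
import numpy as np

def ndvi(nir, red):
    """Per-pixel NDVI = (NIR - Red) / (NIR + Red).
    Pixels where NIR + Red == 0 are returned as NaN."""
    nir = np.asarray(nir, dtype=np.float64)
    red = np.asarray(red, dtype=np.float64)
    total = nir + red
    out = np.full(total.shape, np.nan)
    np.divide(nir - red, total, out=out, where=total != 0)
    return out

def flag_anomalies(index, z_threshold=2.0):
    """Mark pixels whose index deviates from the scene mean by more than
    z_threshold standard deviations (NaN pixels are never flagged)."""
    mean = np.nanmean(index)
    std = np.nanstd(index)
    if not std > 0:
        return np.zeros(index.shape, dtype=bool)
    return np.abs((index - mean) / std) > z_threshold

if __name__ == "__main__":
    # Toy 10x10 reflectance rasters (hypothetical): a uniform crop with one
    # linear strip of stronger growth, e.g. above a buried ditch.
    red = np.full((10, 10), 0.1)
    nir = np.full((10, 10), 0.4)
    nir[:, 4] = 0.6
    index = ndvi(nir, red)
    print(flag_anomalies(index).astype(int))
```

In this toy scene, only the strip of stronger growth is flagged. Real imagery would also need radiometric calibration and some care with field boundaries, tracks and other non-archaeological variation before such flags mean anything.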

Together with a detailed optical 3D model of the whole Voulokaliva site, also produced in November, the datasets we collected provide detailed remote sensing data for this extensive funerary site. A further week of fieldwork is planned for early April, after which we can fully compare and analyse the different visualisations. So, to be continued.